Ever since the beta release of GitHub’s Copilot for vim, I’ve been an avid user, and I can confidently say it has significantly enhanced my coding experience.

Another game changer was the integration of Open Interpreter, which lets me chat with an LLM right in the middle of coding inside vim.

This combination has transformed my workflow: vim’s agility in handling code blends seamlessly with Open Interpreter’s real-time interactions.

The fusion of these tools not only speeds up my coding process but also elevates the overall experience. It’s genuinely a dynamic pairing for any developer.

Incarnation.ai

At incarnation.ai we imagine and build the most interesting AI platform, your digital incarnation.

What is incarnation.ai?

INCARNATION.AI is a virtual incarnation of an entity or person that embodies their character, spirit or quality. It uses AI and machine learning to help with reasoning and decision-making to create an entity that eventually can go even beyond the capabilities of their incarnation.

INCARNATION.AI has many applications. Below are some examples:

- A CEO uses his virtual incarnations to be highly effective and participate in parallel meetings, while still being informed by the AI about key decisions to be made.

- Organizations can define a virtual incarnation that represents their values as defined in their mission statements and create an incarnation that will act and reason accordingly.

- In the physical world, incarnations embodied by robots will be able to represent that person or entity in certain business as well as personal settings.

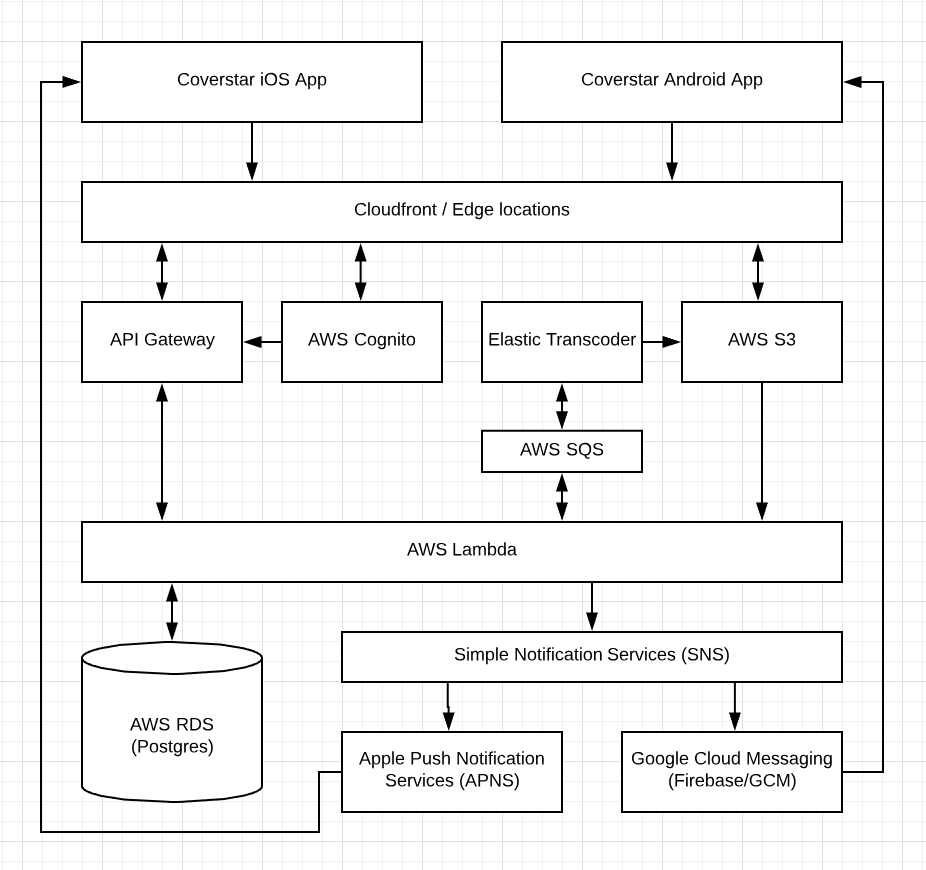

Creating the Coverstar Kpop Video Social Network

The experience of building the Coverstar App, a kpop video social network app, was an exciting challenge. As a small startup with limited manpower and financial resources available, choosing the right technology stack was extremely important.

The backend platform needed to be scalable, cost efficient and capable of delivering high performance video playback. With a frontend MVP iOS app in place, and a plan for an initial social network release within 3 months, the technology stack needed to allow for rapid development.

Serverless technology (e.g. AWS Lambda) presented an environment that met the needs of the project. The choice to employ serverless technology was a game changer and helped us move at an unprecedented pace. Compared to working with containers and microservices, the simplicity of writing functions in AWS Lambda, without worrying about provisioning or about scaling up and down automatically to keep costs low, was incredible.
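To give an idea of how little code such a function needs, here is a minimal sketch of a Node.js Lambda handler. It is not the actual Coverstar code: the handler's purpose, the `videoId` path parameter, and the use of the API Gateway proxy event and response shape are all assumptions for illustration.

```javascript
// Hypothetical handler that returns metadata for a video id.
// Assumes an API Gateway proxy-style event (pathParameters in,
// statusCode/headers/body out).
const handler = async (event) => {
  const params = (event && event.pathParameters) || {};
  const videoId = params.videoId;

  if (!videoId) {
    return {
      statusCode: 400,
      body: JSON.stringify({ error: "videoId is required" }),
    };
  }

  // A real function would query the database here; this sketch returns a stub.
  return {
    statusCode: 200,
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify({ videoId: videoId, status: "ok" }),
  };
};

// Lambda looks up the exported handler by name.
exports.handler = handler;
```

There is no server setup, port binding or process management in the function itself, which is what made this model attractive for a small team.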

Below is a high level diagram of the Coverstar backend implementation:

RDBMS vs NoSQL

A primary decision that needed to be made was between using a relational database (RDBMS) and a NoSQL database system. This required us to take a closer look at the nature of the data we needed to store. We predicted that our database system would mainly store user data, video and media metadata, and data from interactions between our users (e.g. messages, notifications). The nature of our data would be mostly relational, as opposed to having unrelated data entries.

NoSQL would require storing a range of data redundantly (denormalized), or using a service like Amazon EMR to create relationships. Another factor was the volatility of the data schema. High volatility would favor a NoSQL database, which has no schema requirements. Considering all those factors, we decided to opt for a relational database system.

Below is the database schema of the initial release. Since then the number of tables grew considerably.

Adaptive Bitrate Streaming (ABR)

In order to deliver a great video playback experience to the global Coverstar community, slow and/or changing network conditions needed to be taken into consideration.

Two of the most popular implementations of ABR streaming are Apple’s HLS and MPEG-DASH. Both of these technologies work by encoding a source video file into multiple streams with different bitrates. Those streams are split into smaller segments, usually a few seconds in length. The video player then seamlessly switches between the streams depending on the available bandwidth and other factors. Apple’s recommended segment duration was 6s for HLS streams.
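The switching logic can be sketched in a few lines. This is a simplified illustration, not how any particular player implements it: the rendition ladder, the bitrates and the 0.8 safety factor are made-up values, and real players weigh additional factors such as buffer level.

```javascript
// Hypothetical rendition ladder; bitrates in kilobits per second.
const renditions = [
  { name: "240p", bitrateKbps: 400 },
  { name: "480p", bitrateKbps: 1200 },
  { name: "720p", bitrateKbps: 2500 },
];

// Pick the highest-bitrate rendition that fits within a safety margin
// of the measured bandwidth; fall back to the lowest one otherwise.
function pickRendition(ladder, measuredKbps, safetyFactor = 0.8) {
  const sorted = [...ladder].sort((a, b) => a.bitrateKbps - b.bitrateKbps);
  const budget = measuredKbps * safetyFactor;
  let choice = sorted[0];
  for (const rendition of sorted) {
    if (rendition.bitrateKbps <= budget) {
      choice = rendition;
    }
  }
  return choice;
}

// Number of segments needed for a clip, given the segment duration in seconds.
function segmentCount(durationSeconds, segmentSeconds = 6) {
  return Math.ceil(durationSeconds / segmentSeconds);
}
```

With this ladder, a measured bandwidth of 2000 kbps leaves a budget of 1600 kbps and selects the 480p stream, and a 60 second clip split into 6s segments yields 10 segments per stream.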

Final thoughts:

Creating a social network backend was a challenging task, and tuning the system will be a continuous effort. The services that AWS provides, including Elastic Transcoder, Lambda and SNS, have made it possible even for a small startup to create a competitive video social network. In hindsight we are very happy with the technology choices, since the system has been incredibly stable, performant and cost effective.

VIM gives you Super Powers!

Those who know me most likely know that I’m quite a VIM enthusiast.

I love the speed and flexibility of the editor and its ubiquitous availability on UNIX systems.

VIM has a steep learning curve but I guarantee you the effort pays off!

If you are starting off learning VIM, I can recommend the game VIM Adventures which is a fun way to learn VIM.

You can view my .vimrc at GitHub.

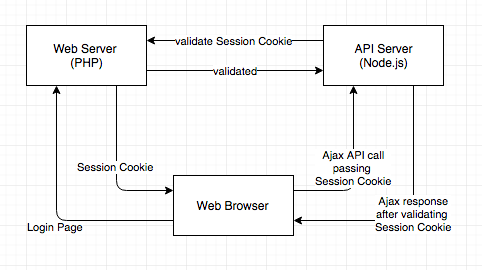

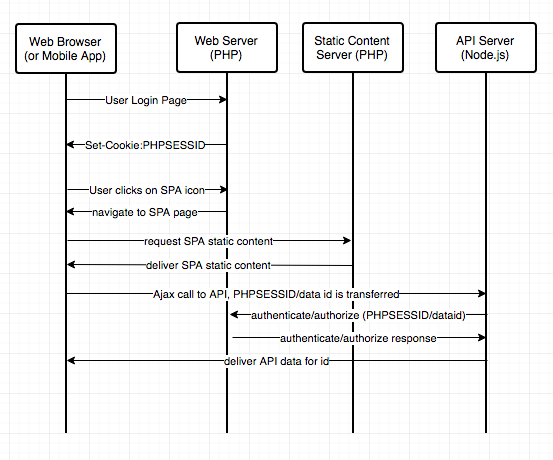

Integrate a Node.js SPA (Single Page Application) with a PHP Web App

I’ve been working on an interesting problem: seamlessly integrating an existing PHP web app with a newly built SPA running on Node.js.

There are many different approaches to solving this problem and this solution is more of a low level implementation, so a certain familiarity with HTTP headers and cookies is required.

This post also doesn’t cover scalability, which I might talk about in a future post.

This post describes:

- How to implement session sharing between PHP and Node.js

- How to authenticate and authorize the RESTful API calls made from the browser

- How to secure the internal API calls made between the PHP and the Node server

1. How to implement session sharing between PHP and Node.js

Your user logs into the app, providing some credentials and a cookie or token of some sort is returned, which you use to identify that user.

Your AJAX requests to the API server carry the same logged-in token (PHPSESSID) as before. The API server then checks that token against an internal API on the PHP server and restricts the information to just what the user is allowed to see.

The important consideration is that RESTful web services require authentication with every request.
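On the Node.js side, the first step is to pull the session id out of the request. The following is a minimal sketch, assuming the cookie arrives in the standard `Cookie` header; `lookupSession` is a hypothetical function standing in for the call to the internal PHP API and is not part of the original implementation.

```javascript
// Extract a named cookie value from a raw Cookie request header.
function getCookie(cookieHeader, name) {
  if (!cookieHeader) return null;
  for (const part of cookieHeader.split(";")) {
    const idx = part.indexOf("=");
    if (idx === -1) continue;
    const key = part.slice(0, idx).trim();
    if (key === name) {
      return part.slice(idx + 1).trim();
    }
  }
  return null;
}

// Authenticate a request by validating its PHP session id.
// `lookupSession` (hypothetical) asks the PHP server about the session and
// resolves to a user object, or null if the session is unknown or expired.
async function authenticate(req, lookupSession) {
  const sid = getCookie(req.headers.cookie, "PHPSESSID");
  if (!sid) return null;
  return lookupSession(sid);
}
```

Because every request is authenticated, `authenticate` would run before each API handler, and a `null` result would translate into a 401 response.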

The diagrams below show the interactions between the servers:

2. How to authenticate and authorize the RESTful API calls made from the browser

Same-origin-policy and CORS

Since the PHP and Node API servers run on different IP addresses, the HTTPS requests made using the XMLHttpRequest object are subject to the same-origin policy. By default, this means a page can only make such requests to the origin (scheme, host and port) it was loaded from.

The CORS (Cross Origin Resource Sharing) mechanism provides a way for web servers running on different IPs or domains to support cross-site access.

Client side code:

$.ajaxPrefilter( function( options, originalOptions, jqXHR ) {
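The client-side snippet above is cut off in this post. One possible shape for such a prefilter is sketched below, assuming its purpose is to send the session cookie along with cross-origin requests; the function name `sendCookiesPrefilter` is made up for this example.

```javascript
// Prefilter that marks every request as cross-domain and asks the browser
// to include cookies (such as PHPSESSID) with it.
function sendCookiesPrefilter(options) {
  options.crossDomain = true;
  options.xhrFields = Object.assign({}, options.xhrFields, {
    withCredentials: true,
  });
}

// Register with jQuery only when it is loaded (i.e. in the browser).
if (typeof jQuery !== "undefined" && typeof jQuery.ajaxPrefilter === "function") {
  jQuery.ajaxPrefilter(sendCookiesPrefilter);
}
```

Without `withCredentials`, the browser leaves cookies out of cross-origin XMLHttpRequest calls, so the API server would never see the session id.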

Node.js code for implementing CORS headers:

var cors = require('cors');
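Only the first line of the Node.js code survives above. To show what the CORS headers themselves look like, here is a sketch that sets them by hand instead of using the `cors` package. The allowed origin and the list of methods are placeholders, not values from the original setup.

```javascript
// Hypothetical list of origins that may call the API (the PHP web app's origin).
const allowedOrigins = ["https://app.example.com"];

// Build the CORS response headers for a request origin.
// Returns an empty object when the origin is not allowed.
function corsHeaders(origin, allowed = allowedOrigins) {
  if (!origin || !allowed.includes(origin)) return {};
  return {
    // With credentials (cookies), the origin must be echoed explicitly;
    // the wildcard "*" is not accepted by browsers in that case.
    "Access-Control-Allow-Origin": origin,
    "Access-Control-Allow-Credentials": "true",
    "Access-Control-Allow-Methods": "GET, POST, PUT, DELETE, OPTIONS",
    "Access-Control-Allow-Headers": "Content-Type",
    "Vary": "Origin",
  };
}

// Request listener: answers preflight requests and adds CORS headers to replies.
function handleRequest(req, res) {
  const headers = corsHeaders(req.headers.origin);
  if (req.method === "OPTIONS") {
    res.writeHead(204, headers);
    res.end();
    return;
  }
  res.writeHead(200, Object.assign({ "Content-Type": "application/json" }, headers));
  res.end(JSON.stringify({ ok: true }));
}

// To try it: require("http").createServer(handleRequest).listen(3000);
```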

PHP code for verifying the session cookie:

$sid = $_REQUEST["PHPSESSID"];

3. How to secure the internal API calls made between the PHP and the Node server

Depending on how strong the security needs to be, there are several approaches to securing the internal APIs. Some of them are:

- Restricting the IP/port to only allow access from the internal servers.

- Using TLS in combination with basic authentication. If the security requirements are not as high, this is an easy way of implementing security.

- Token-based security, e.g. OAuth.

- Using client-authenticated TLS handshakes. Below is a good article:

https://engineering.circle.com/https-authorized-certs-with-node-js-315e548354a2#.sakue1rg6
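As an illustration of the basic authentication option, the sketch below checks an incoming `Authorization` header on the Node.js side. The helper names are made up for this example, the credentials would come from configuration, and the check only makes sense when the connection itself is protected by TLS.

```javascript
const nodeCrypto = require("crypto");

// Build the Authorization header value the calling server would send.
function basicAuthHeader(user, password) {
  return "Basic " + Buffer.from(user + ":" + password).toString("base64");
}

// Compare two strings in constant time. Hashing first gives both inputs
// the same length, which timingSafeEqual requires.
function safeEqual(a, b) {
  const ha = nodeCrypto.createHash("sha256").update(a).digest();
  const hb = nodeCrypto.createHash("sha256").update(b).digest();
  return nodeCrypto.timingSafeEqual(ha, hb);
}

// Check an incoming Authorization header against the expected credentials.
function isAuthorized(authorizationHeader, user, password) {
  if (typeof authorizationHeader !== "string") return false;
  return safeEqual(authorizationHeader, basicAuthHeader(user, password));
}
```

The internal API would call `isAuthorized(req.headers.authorization, user, password)` before doing any work and respond with 401 when it returns false.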

Vintage Synthesizers

I’ve been playing with the idea of making electronic music again. Back in the 80s I was fascinated by upcoming new bands like Kraftwerk, Depeche Mode, Yello and Front 242.

I loved the new sounds that were possible using analog and digital synthesizers. I started to make music myself with a little Casio K1 Sample keyboard. Later I bought a used Waldorf PRK Processor keyboard which I used with different MIDI expanders. The build quality of the PRK keyboard was amazing. It had a 68000 CPU and a 5 ½’’ floppy drive, and it was a monster at 84 pounds!

http://www.synthmuseum.com/ppg/ppgprk01.html

My neighbor lent me his Dave Smith Instruments Mopho Keyboard for a couple of weeks:

https://www.davesmithinstruments.com/product/mopho-keyboard/

I loved the powerful crushing basses this thing can produce and the arpeggiator, sequencer, LFO and two oscillators allow for an immense range of sounds.

Other great options are the original Moog synthesizers or a Yamaha DX7; both are classics.

Below is a website that has an immense collection of vintage synthesizers, including sound samples:

http://www.vintagesynth.com/

The AI revolution is starting

Law Firm Hires Ross, an AI Lawyer:

http://mishtalk.com/2016/05/12/law-firm-hires-ross-an-artificial-intelligence-lawyer/

Students didn’t know their TA was a computer:

http://www.news.gatech.edu/2016/05/09/artificial-intelligence-course-creates-ai-teaching-assistant

AI Sales Associate Robots:

http://futurism.com/ibms-watson-powered-sales-associate-robots-to-be-rolled-out-into-u-s-retailers-by-june-2016/

Chatbot Mitsuku

I just spent a few minutes talking to the 2015 Loebner Prize winner Mitsuku:

At the end I asked her: “What did you learn today?” Mitsuku answered:

“I learned a bit more about human behavior and relationships.”

I thought that was quite a good answer :)

Boston Dynamics Atlas Spoof

Funny spoof on the Boston Dynamics Atlas video #robotlivesmatter: