Building the Coverstar app, a K-pop video social network, was an exciting challenge. For a small startup with limited manpower and financial resources, choosing the right technology stack was extremely important.

The backend platform needed to be scalable, cost-efficient and capable of delivering high-performance video playback. With a frontend MVP iOS app in place and an initial social network release planned within 3 months, the technology stack also needed to allow for rapid development.

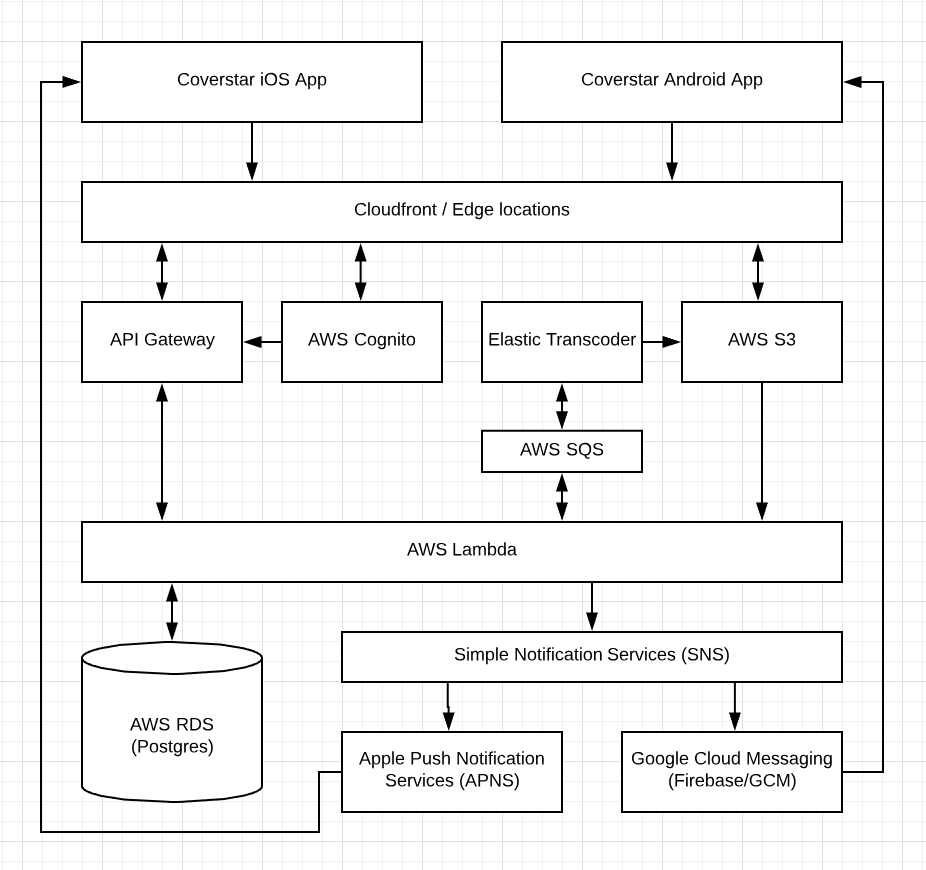

Serverless technology (e.g. AWS Lambda) offered an environment that met the needs of the project. The choice to employ serverless technology was a game changer and helped us move at an unprecedented pace. Compared to working with containers and microservices, the simplicity of writing functions in AWS Lambda, without worrying about provisioning, scaling, or automatically winding capacity up and down to keep costs low, was incredible.
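To give a feel for how little code a single function needs, here is a minimal sketch of a Lambda handler in Python. It is not taken from the Coverstar codebase: the `video_id` parameter, the response fields, and the assumption that the function sits behind an API Gateway proxy integration are all hypothetical and chosen only for illustration.

```python
import json


def handler(event, context):
    """Hypothetical Lambda entry point (assumes an API Gateway proxy event)."""
    # API Gateway sends None when the request has no query string.
    params = event.get("queryStringParameters") or {}
    video_id = params.get("video_id")

    if not video_id:
        return {
            "statusCode": 400,
            "body": json.dumps({"error": "video_id is required"}),
        }

    # A real function would look up the video metadata in the database here.
    # This sketch only echoes the id back to the caller.
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"video_id": video_id}),
    }
```

There is no server setup, process management, or scaling logic in the function itself; the platform handles all of that, which is what made this model attractive for a small team.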

Below is a high-level diagram of the Coverstar backend implementation:

RDBMS vs NoSQL

A primary decision was whether to use a relational database (RDBMS) or a NoSQL database system. This required us to take a closer look at the nature of the data we needed to store. We predicted that our database system would mainly store user data, video and media metadata, and data from interactions between our users (e.g. messages, notifications). Our data would be mostly relational, as opposed to consisting of unrelated entries.

NoSQL would have required storing a range of data redundantly in denormalized form, or using a service like Amazon EMR to create relationships. Another factor was the volatility of the data schema: high volatility would favor a NoSQL database, which has no schema requirements. Considering all those factors, we decided to opt for a relational database system.
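The relational nature of this kind of data can be illustrated with a small sketch. The tables and columns here are invented for the example and are not the actual Coverstar schema; SQLite is used only because it ships with Python. A notification references the users and the video involved instead of copying their names and titles, so a single join resolves everything at read time.

```python
import sqlite3


def build_demo_db() -> sqlite3.Connection:
    """Create a tiny in-memory database with a hypothetical schema."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE users (
            id       INTEGER PRIMARY KEY,
            username TEXT NOT NULL UNIQUE
        );
        CREATE TABLE videos (
            id      INTEGER PRIMARY KEY,
            user_id INTEGER NOT NULL REFERENCES users(id),
            title   TEXT NOT NULL
        );
        CREATE TABLE notifications (
            id           INTEGER PRIMARY KEY,
            recipient_id INTEGER NOT NULL REFERENCES users(id),
            actor_id     INTEGER NOT NULL REFERENCES users(id),
            video_id     INTEGER NOT NULL REFERENCES videos(id),
            kind         TEXT NOT NULL
        );
    """)
    conn.execute("INSERT INTO users (id, username) VALUES (1, 'alice'), (2, 'bob')")
    conn.execute("INSERT INTO videos (id, user_id, title) VALUES (10, 1, 'Dance cover')")
    conn.execute(
        "INSERT INTO notifications (recipient_id, actor_id, video_id, kind) "
        "VALUES (1, 2, 10, 'like')"
    )
    conn.commit()
    return conn


def notifications_for(conn: sqlite3.Connection, username: str) -> list:
    """Return (actor username, kind, video title) rows for one recipient."""
    rows = conn.execute(
        """
        SELECT actor.username, n.kind, v.title
        FROM notifications AS n
        JOIN users  AS recipient ON recipient.id = n.recipient_id
        JOIN users  AS actor     ON actor.id     = n.actor_id
        JOIN videos AS v         ON v.id         = n.video_id
        WHERE recipient.username = ?
        ORDER BY n.id
        """,
        (username,),
    )
    return rows.fetchall()
```

Because the username lives in exactly one row, renaming a user is a single `UPDATE`, and every existing notification picks up the new name. In a denormalized store, the same change would mean rewriting every document that embedded the old name.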

Below is the database schema of the initial release. Since then, the number of tables has grown considerably.

Adaptive Bitrate Streaming (ABR)

To deliver a great video playback experience to the global Coverstar community, slow and/or changing network conditions needed to be taken into consideration.

Two of the most popular implementations of ABR streaming are Apple’s HLS and MPEG-DASH. Both of these technologies work by encoding a source video file into multiple streams with different bitrates. Those streams are split into smaller segments, usually a few seconds in length. The video player then seamlessly switches between the streams depending on the available bandwidth and other factors. Apple’s recommended segment duration for HLS streams was 6s.
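The mechanism described above can be sketched in a few lines. This is a deliberately simplified model, not how any particular player decides: the bitrate ladder, the `safety` margin, and the function names are assumptions made for the example, and real players also weigh factors such as buffer level and screen size.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Rendition:
    name: str
    bitrate_kbps: int


# Illustrative bitrate ladder; the values are assumptions, not Coverstar's settings.
LADDER = [
    Rendition("360p", 800),
    Rendition("540p", 2000),
    Rendition("720p", 4000),
]


def segment_count(duration_s: float, segment_s: float = 6.0) -> int:
    """Number of segments needed to cover a video of the given duration."""
    return math.ceil(duration_s / segment_s)


def pick_rendition(ladder, bandwidth_kbps: float, safety: float = 0.8) -> Rendition:
    """Pick the highest-bitrate rendition that fits within the bandwidth budget.

    Only a fraction (`safety`) of the measured bandwidth is budgeted, which
    leaves headroom for fluctuations. If nothing fits, fall back to the
    lowest-bitrate rendition so playback can continue.
    """
    budget = bandwidth_kbps * safety
    affordable = [r for r in ladder if r.bitrate_kbps <= budget]
    if not affordable:
        return min(ladder, key=lambda r: r.bitrate_kbps)
    return max(affordable, key=lambda r: r.bitrate_kbps)
```

With 6s segments, a 60s video is split into 10 segments per stream, and a player using this model would re-run `pick_rendition` with a fresh bandwidth estimate before fetching each one. That per-segment decision is what allows the quality to change mid-playback without interrupting the video.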

Final thoughts:

Creating a social network backend was a challenging task, and tuning the system will be a continuous effort. The services that AWS provides, including Elastic Transcoder, Lambda and SNS, have made it possible even for a small startup to create a competitive video social network. In hindsight, we are very happy with the technology choices, since the system has been incredibly stable, performant and cost-effective.